How purist should our taxonomy of compilers be?

“Is it really a compiler, though?”

We certainly aren’t the first in the lab automation industry to think about automated protocol generation as compilation. We stand on the shoulders of giants like Synthace with Antha. Yet the question comes up a lot, sometimes phrased as genuine curiosity and sometimes as polite skepticism: “Aren’t compiler engineers supposed to be low level?” “You are using ‘compiler’ as an analogy, right?” “Do you mean transpiler?” “Don’t we already have compilers for liquid handlers between the SDKs and the firmware?”

Somewhere along the way, “compiler” became culturally synonymous with “thing that turns a general-purpose programming language into unreadable machine code” rather than the broader, functionally defined scope: systematic translation between levels of abstraction while preserving semantics.

I’ve grown to appreciate these questions because they reveal a lot about how we collectively think about what compilation means. They present a perfect opportunity to discuss my favorite topics, and I think we can learn a great deal from parallel domains that have already solved problems similar to those lab automation faces.

The taxonomy of compilers

Hank Green recently published a set of videos talking about paraphyletic and polyphyletic groups, where he argued that the term “fish” doesn’t have any concrete definition. In evolutionary biology, a paraphyletic group is one we define by taking a common ancestor and, for practical, intuitive reasons, excluding some of its descendants, while a polyphyletic group is one we define by grouping organisms together for similar reasons despite the absence of a shared recent ancestor. As soon as you start putting explicit scientific bounds on the definition of a fish, it starts to include things that we intuitively feel are not fish or, conversely, to exclude things that we intuitively feel must be fish. If you aren’t careful, Green argues, you might accidentally count humans as fish and rule out bass.

But of course we keep using the word fish. It’s still useful, and we don’t even notice that we can’t explain exactly what we mean. Everyone knows what a fish is until you try to define ‘fish’ precisely.

I think compilers and interpreters are similar.

I love this ‘fishy’ framing of compilers because it highlights how tricky definitions can be. Much of early biology was observing, classifying, and categorizing ambiguous relationships, and working with natural language feels similar. It’s perfect in a very meta way that the definition of a compiler is so ambiguous, because our whole company is about taking fuzzy language and making it concrete, about deciding what language is useful and what language is overwhelming and unnecessary.

The core concept of compilers relates to how we define someone’s intent and whether we can separate it from the details. What details do we care about and when do we care about them? It’s not a bad thing that ‘fish’ and ‘compiler’ are not precise technical terms. It’s part of the beauty of natural language that we can use them to draw associations and parallels implicitly without having to be precise: we can express intent at a high level and still convey meaning.

My attempt at listing the absolutely necessary compiler elements: 1) a concrete intent model and 2) a mapping to an execution model. Unlike our fuzzy, flexible natural language, the input model cannot be ambiguous, because we can’t define systematic rules for a transformation without defining exactly what it acts on. While each input must have one precise meaning, there may be many valid implementations of it. This is the point of the input model: allowing the user to express themselves while avoiding thinking about the massive execution space. I don’t care what kind of transport was used to ship my package, I just care about defining its destination.
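To make the two elements concrete, here is a minimal sketch. Everything in it is hypothetical and invented for illustration: the `Transfer` intent, the `Step` primitives, and both lowering functions are assumptions, not our actual DSL or any real device API. It shows one unambiguous intent being mapped to two different but equally valid execution plans.

```python
from __future__ import annotations

import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Transfer:
    """Intent model: WHAT should happen, with exactly one meaning."""
    source: str
    dest: str
    volume_ul: float


@dataclass(frozen=True)
class Step:
    """Execution model: one primitive a (hypothetical) device understands."""
    op: str  # "aspirate" or "dispense"
    well: str
    volume_ul: float


def _trips(t: Transfer, volumes: list[float]) -> list[Step]:
    """Expand a list of per-trip volumes into aspirate/dispense pairs."""
    steps = []
    for v in volumes:
        steps.append(Step("aspirate", t.source, v))
        steps.append(Step("dispense", t.dest, v))
    return steps


def lower_greedy(t: Transfer, max_ul: float) -> list[Step]:
    """Mapping A: fill the tip as much as possible on every trip."""
    volumes, remaining = [], t.volume_ul
    while remaining > 0:
        v = min(remaining, max_ul)
        volumes.append(v)
        remaining -= v
    return _trips(t, volumes)


def lower_even(t: Transfer, max_ul: float) -> list[Step]:
    """Mapping B: use the same number of trips, but split the volume evenly."""
    n = math.ceil(t.volume_ul / max_ul)
    return _trips(t, [t.volume_ul / n] * n)


def delivered(steps: list[Step], dest: str) -> float:
    """The semantics we must preserve: total volume arriving at `dest`."""
    return sum(s.volume_ul for s in steps if s.op == "dispense" and s.well == dest)


intent = Transfer("A1", "B1", 150.0)
greedy = lower_greedy(intent, max_ul=100.0)  # trips of 100 and 50
even = lower_even(intent, max_ul=100.0)      # trips of 75 and 75

# Different plans, same meaning.
assert greedy != even
assert delivered(greedy, "B1") == delivered(even, "B1") == intent.volume_ul
```

The user only ever writes the `Transfer`. Which of the two plans is used is the compiler’s business, much like the transport used to ship the package.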

In domain-specific languages, we often call this ‘declarative programming’, although this term is also fairly muddled. Even C, the designated ‘low-level’ language, is a declarative language in some sense. You don’t specify which CPU register holds which value or the exact order in which instructions execute: the compiler figures that out. ‘High level’ and ‘abstract’ are relative, and simply by having an input language compiled to an output language you have an intent model and an execution model.

As a consequence of the space of semantically correct implementations usually being enormous, the goal of optimization naturally emerges. If I’ve declaratively stated what I want, I should be able to assume I’ll get it. “Correctness” should be taken for granted and “quality” of execution is the measure of a compiler. Here again we model the user’s domain and can devise metrics they care about to evaluate trade-offs in execution. For example, a compiled program might be fast but use too much memory or alternatively compact but slow.
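A minimal sketch of that trade-off, assuming a hypothetical set of already-correct candidates and a simple weighted cost model (the candidate names, the numbers, and the weights are all made up for illustration):

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    """One semantically correct implementation, with its measured costs."""
    name: str
    runtime_ms: float
    memory_mb: float


def pick(candidates, w_time, w_mem):
    """Choose the candidate with the lowest user-weighted cost."""
    return min(candidates, key=lambda c: w_time * c.runtime_ms + w_mem * c.memory_mb)


# Both candidates are assumed correct; they differ only in quality.
candidates = [
    Candidate("fast_but_large", runtime_ms=10.0, memory_mb=64.0),
    Candidate("compact_but_slow", runtime_ms=40.0, memory_mb=8.0),
]

speed_first = pick(candidates, w_time=1.0, w_mem=0.1)
memory_first = pick(candidates, w_time=0.1, w_mem=1.0)

print(speed_first.name)   # fast_but_large
print(memory_first.name)  # compact_but_slow
```

The weights are where the user’s domain enters: the same candidate set yields a different winner depending on what the user says they care about.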

Once you see compilation in this intent-to-execution framework, you start noticing it everywhere and that’s when the taxonomy gets tricky.

Every machine needs inputs; every computerized process has an interface. Domain-specific languages are ubiquitous, and even the line between an internal DSL and an everyday API is sometimes blurry (Fowler, 2010). We use words like translator, planner, optimizer, rewriter, compiler, interpreter, transpiler, engine, slicer, and code generator, but what we are always doing is parsing some structured input, transforming it through intermediate representations, and generating output that means the same thing but at a different level of abstraction.
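The parse, transform, generate shape fits in a few lines. The sketch below is a toy, not a description of our system: it borrows Python’s standard `ast` module as the parser, treats the syntax tree as the intermediate representation, and emits instructions for a made-up stack machine (the `PUSH`/`ADD`/`SUB`/`MUL` instruction set is an assumption for illustration).

```python
import ast

# Map syntax-tree operator types to instructions of a hypothetical stack machine.
OPS = {ast.Add: "ADD", ast.Sub: "SUB", ast.Mult: "MUL"}


def lower(node):
    """Transform the tree (our IR) into a flat list of stack instructions."""
    if isinstance(node, ast.Expression):
        return lower(node.body)
    if isinstance(node, ast.Constant) and isinstance(node.value, (int, float)):
        return [("PUSH", node.value)]
    if isinstance(node, ast.BinOp) and type(node.op) in OPS:
        # Post-order: operands first, then the operator.
        return lower(node.left) + lower(node.right) + [(OPS[type(node.op)], None)]
    raise ValueError(f"unsupported syntax: {ast.dump(node)}")


def compile_expr(source):
    """Parse structured input, then generate lower-level output."""
    return lower(ast.parse(source, mode="eval"))


def run(program):
    """Execute the generated instructions on the toy stack machine."""
    stack = []
    for op, arg in program:
        if op == "PUSH":
            stack.append(arg)
        else:
            b, a = stack.pop(), stack.pop()
            stack.append({"ADD": a + b, "SUB": a - b, "MUL": a * b}[op])
    return stack.pop()


program = compile_expr("1 + 2 * 3")
print(program)
# [('PUSH', 1), ('PUSH', 2), ('PUSH', 3), ('MUL', None), ('ADD', None)]
print(run(program))  # 7
```

The output looks nothing like the input, yet running it gives the same answer the original expression denotes. Swap the arithmetic for protocols and the stack machine for a liquid handler and the shape stays the same.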

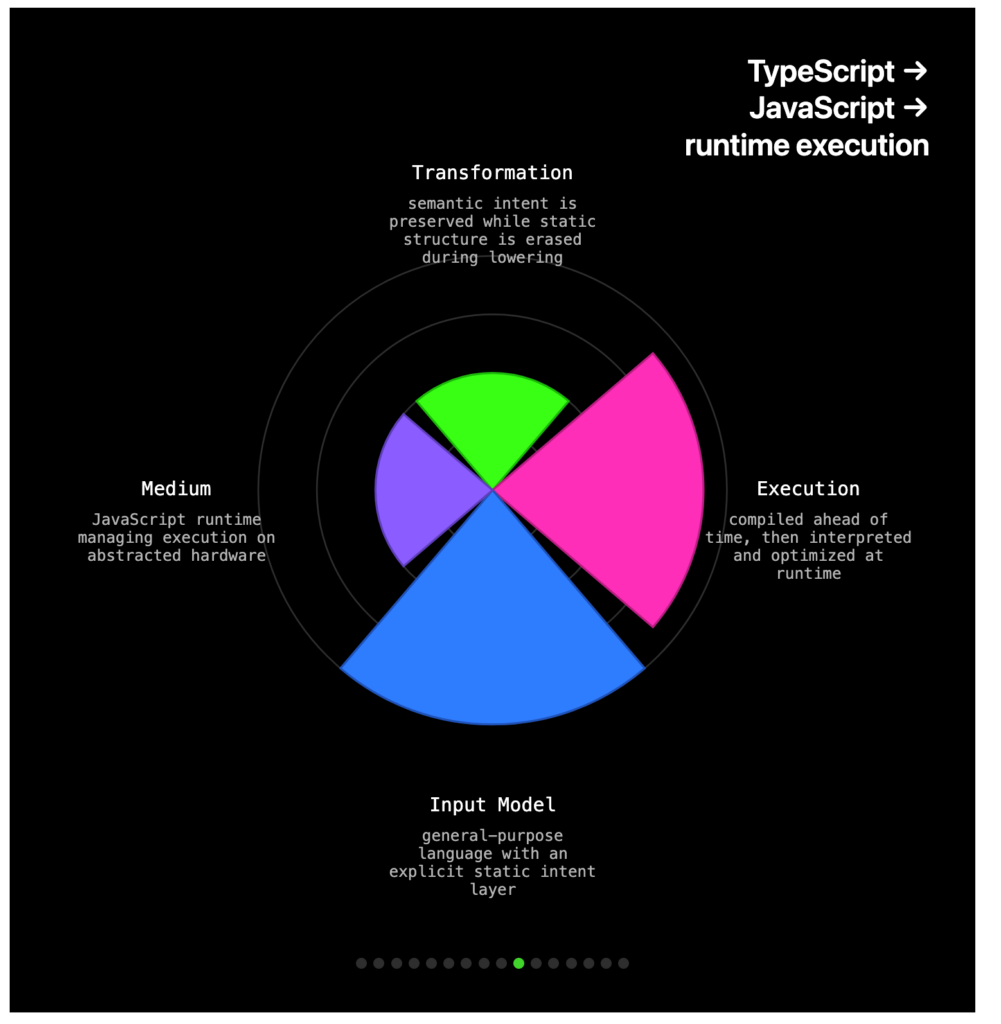

If I were to try to make one of those purist-rebel alignment charts for compilers, I think the options for axes would be:

Transformation:

Purist: converted and highly optimized in an execution space

Neutral: lowering of abstraction level

Rebel: any semantics-preserving translation

Execution:

Purist: compiled to a separate executable representation

Neutral: interpreted directly into execution

Rebel: continuously interpreted commands allowing side effects

Input model:

Purist: general purpose language

Neutral: formal textual syntax

Rebel: any structured artifact

Medium:

Purist: execution on a physical machine

Neutral: execution on virtual / abstract machine

Rebel: any system state

Note: since the point of this blog is to be very liberal with how we define ‘compiler’, we are using the terms interpreter and compiler interchangeably.

By this alignment chart we are solidly a compiler. The only axis on which we get downranked is Input Model, as our DSLs aren’t general-purpose languages.

So we are building a compiler then – so what?

When we call what we’re building a compiler, we aren’t being pedantic; we are making a claim about the knowledge and techniques we are using to build it. Compiler terminology gives us access to engineering practices refined over decades, with lessons like: correctness comes before optimization; intermediate representations let you separate concerns and support multiple backends; cost models should be exposed to users, not hidden in heuristics; and declarative languages enable better usability and broader optimization than imperative ones. Lab automation can apply these lessons directly instead of rediscovering them. There’s more to it than just technical takeaways. If you want to hear about some of the normative, institutional lessons I think we can learn from parallel fields, you can read my blog on the history of cultural resistance to compilers.